Transformers documentation

Grounding DINO

Grounding DINO

개요

Grounding DINO 모델은 Shilong Liu, Zhaoyang Zeng, Tianhe Ren, Feng Li, Hao Zhang, Jie Yang, Chunyuan Li, Jianwei Yang, Hang Su, Jun Zhu, Lei Zhang이 Grounding DINO: Marrying DINO with Grounded Pre-Training for Open-Set Object Detection에서 제안한 모델입니다. Grounding DINO는 폐쇄형 객체 탐지 모델을 텍스트 인코더로 확장하여 개방형 객체 탐지를 가능하게 합니다. 이 모델은 COCO 제로샷에서 52.5 AP와 같은 놀라운 결과를 달성합니다.

논문의 초록은 다음과 같습니다:

본 논문에서는 트랜스포머 기반 탐지기 DINO를 기반 사전 학습과 결합하여 Grounding DINO라는 개방형 객체 탐지기를 제시합니다. 이는 카테고리 이름이나 참조 표현 등의 사용자 입력으로 임의의 객체를 탐지할 수 있습니다. 개방형 객체 탐지의 핵심 해결책은 개방형 개념 일반화를 위해 폐쇄형 탐지기에 언어를 도입하는 것입니다. 언어와 비전 모달리티를 효과적으로 융합하기 위해, 폐쇄형 탐지기를 개념적으로 세 단계로 나누어 특성 강화기, 언어 기반 쿼리 선택, 교차 모달리티 융합을 위한 교차 모달리티 디코더를 포함하는 긴밀한 융합 솔루션을 제안합니다. 이전 연구들이 주로 새로운 카테고리에 대한 개방형 객체 탐지를 평가한 반면, 우리는 속성으로 지정된 객체에 대한 참조 표현 이해에 대한 평가도 수행할 것을 제안합니다. Grounding DINO는 COCO, LVIS, ODinW, RefCOCO/+/g 벤치마크를 포함한 세 가지 설정 모두에서 놀라운 성능을 보입니다. Grounding DINO는 COCO 탐지 제로샷 전이 벤치마크에서 52.5 AP(Average Precision, 평균 정밀도)를 달성했습니다. 즉, COCO의 학습 데이터 없이도 이러한 성과를 얻었습니다. 평균 26.1 AP로 ODinW 제로샷 벤치마크에서 새로운 기록을 세웠습니다.

Grounding DINO 개요. 원본 논문에서 가져왔습니다.

Grounding DINO 개요. 원본 논문에서 가져왔습니다. 이 모델은 EduardoPacheco와 nielsr에 의해 기여되었습니다. 원본 코드는 여기에서 찾을 수 있습니다.

사용 팁

- GroundingDinoProcessor를 사용하여 모델을 위한 이미지-텍스트 쌍을 준비할 수 있습니다.

- 텍스트에서 클래스를 구분할 때는 마침표를 사용하세요. 예: “a cat. a dog.”

- 여러 클래스를 사용할 때(예:

"a cat. a dog."), GroundingDinoProcessor의post_process_grounded_object_detection을 사용해 출력을 후처리해야 합니다.post_process_object_detection에서 반환되는 레이블은 prob > threshold인 모델 차원의 인덱스를 나타내기 때문입니다.

다음은 제로샷 객체 탐지에 모델을 사용하는 방법입니다:

>>> import requests

>>> import torch

>>> from PIL import Image

>>> from transformers import AutoProcessor, AutoModelForZeroShotObjectDetection

>>> model_id = "IDEA-Research/grounding-dino-tiny"

>>> device = "cuda"

>>> processor = AutoProcessor.from_pretrained(model_id)

>>> model = AutoModelForZeroShotObjectDetection.from_pretrained(model_id).to(device)

>>> image_url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> image = Image.open(requests.get(image_url, stream=True).raw)

>>> # 고양이와 리모컨 확인

>>> text_labels = [["a cat", "a remote control"]]

>>> inputs = processor(images=image, text=text_labels, return_tensors="pt").to(device)

>>> with torch.no_grad():

... outputs = model(**inputs)

>>> results = processor.post_process_grounded_object_detection(

... outputs,

... inputs.input_ids,

... box_threshold=0.4,

... text_threshold=0.3,

... target_sizes=[image.size[::-1]]

... )

# 첫 번째 이미지 결과 가져오기

>>> result = results[0]

>>> for box, score, labels in zip(result["boxes"], result["scores"], result["labels"]):

... box = [round(x, 2) for x in box.tolist()]

... print(f"Detected {labels} with confidence {round(score.item(), 3)} at location {box}")

Detected a cat with confidence 0.468 at location [344.78, 22.9, 637.3, 373.62]

Detected a cat with confidence 0.426 at location [11.74, 51.55, 316.51, 473.22]Grounded SAM

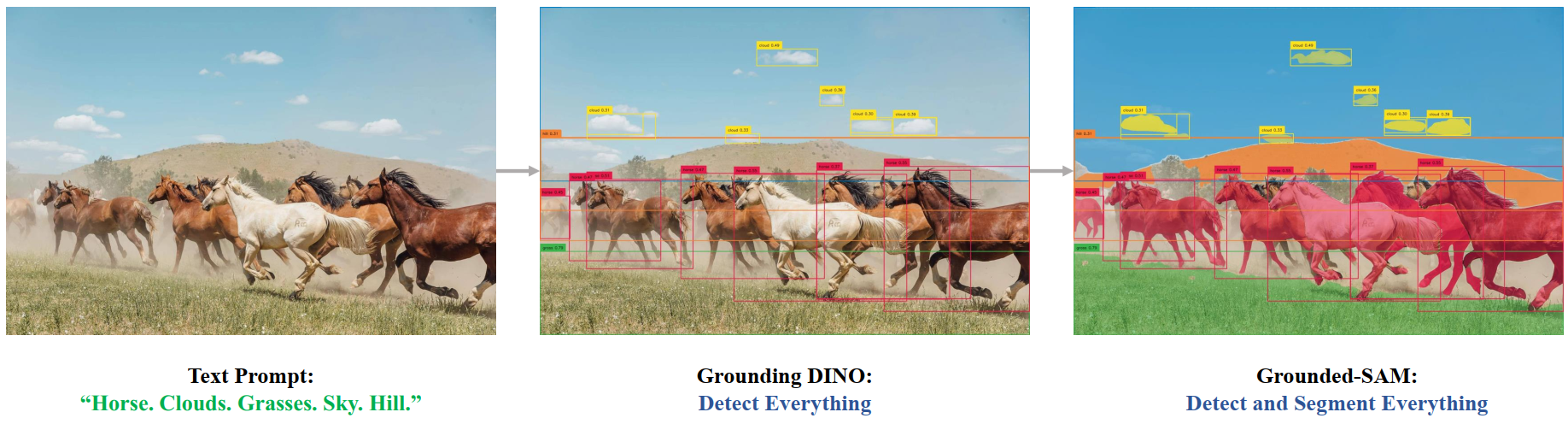

Grounded SAM: Assembling Open-World Models for Diverse Visual Tasks에서 소개된 대로 Grounding DINO를 Segment Anything 모델과 결합하여 텍스트 기반 마스크 생성을 할 수 있습니다. 자세한 내용은 이 데모 노트북 🌍을 참조하세요.

Grounded SAM 개요. 원본 저장소에서 가져왔습니다.

Grounded SAM 개요. 원본 저장소에서 가져왔습니다. 리소스

Grounding DINO를 시작하는 데 도움이 되는 공식 Hugging Face 및 커뮤니티(🌎로 표시) 리소스 목록입니다. 여기에 포함될 리소스를 제출하고 싶다면 Pull Request를 자유롭게 열어주세요. 검토해드리겠습니다! 리소스는 기존 리소스를 복제하는 대신 새로운 것을 보여주는 것이 이상적입니다.

GroundingDinoImageProcessor

class transformers.GroundingDinoImageProcessor

< source >( **kwargs: typing_extensions.Unpack[transformers.models.grounding_dino.image_processing_grounding_dino.GroundingDinoImageProcessorKwargs] )

Parameters

- format (

str, kwargs, optional, defaults toAnnotationFormat.COCO_DETECTION) — Data format of the annotations. One of “coco_detection” or “coco_panoptic”. - do_convert_annotations (

bool, kwargs, optional, defaults toTrue) — Controls whether to convert the annotations to the format expected by the GROUNDING_DINO model. Converts the bounding boxes to the format(center_x, center_y, width, height)and in the range[0, 1]. Can be overridden by thedo_convert_annotationsparameter in thepreprocessmethod. - **kwargs (

ImagesKwargs, optional) — Additional image preprocessing options. Model-specific kwargs are listed above; see the TypedDict class for the complete list of supported arguments.

Constructs a GroundingDinoImageProcessor image processor.

preprocess

< source >( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), list['PIL.Image.Image'], list[numpy.ndarray], list['torch.Tensor']] annotations: dict[str, int | str | list[dict]] | list[dict[str, int | str | list[dict]]] | None = None return_segmentation_masks: bool | None = None masks_path: str | pathlib.Path | None = None **kwargs: typing_extensions.Unpack[transformers.models.grounding_dino.image_processing_grounding_dino.GroundingDinoImageProcessorKwargs] ) → ~image_processing_base.BatchFeature

Parameters

- images (

Union[PIL.Image.Image, numpy.ndarray, torch.Tensor, list[PIL.Image.Image], list[numpy.ndarray], list[torch.Tensor]]) — Image to preprocess. Expects a single or batch of images with pixel values ranging from 0 to 255. If passing in images with pixel values between 0 and 1, setdo_rescale=False. - annotations (

AnnotationTypeorlist[AnnotationType], optional) — Annotations to transform according to the padding that is applied to the images. - return_segmentation_masks (

bool, optional, defaults toself.return_segmentation_masks) — Whether to return segmentation masks. - masks_path (

strorpathlib.Path, optional) — Path to the directory containing the segmentation masks. - format (

str, kwargs, optional, defaults toAnnotationFormat.COCO_DETECTION) — Data format of the annotations. One of “coco_detection” or “coco_panoptic”. - do_convert_annotations (

bool, kwargs, optional, defaults toTrue) — Controls whether to convert the annotations to the format expected by the GROUNDING_DINO model. Converts the bounding boxes to the format(center_x, center_y, width, height)and in the range[0, 1]. Can be overridden by thedo_convert_annotationsparameter in thepreprocessmethod. - return_tensors (

stror TensorType, optional) — Returns stacked tensors if set to'pt', otherwise returns a list of tensors. - **kwargs (

ImagesKwargs, optional) — Additional image preprocessing options. Model-specific kwargs are listed above; see the TypedDict class for the complete list of supported arguments.

Returns

~image_processing_base.BatchFeature

- data (

dict) — Dictionary of lists/arrays/tensors returned by the call method (‘pixel_values’, etc.). - tensor_type (

Union[None, str, TensorType], optional) — You can give a tensor_type here to convert the lists of integers in PyTorch/Numpy Tensors at initialization.

GroundingDinoImageProcessorFast

class transformers.GroundingDinoImageProcessor

< source >( **kwargs: typing_extensions.Unpack[transformers.models.grounding_dino.image_processing_grounding_dino.GroundingDinoImageProcessorKwargs] )

Parameters

- format (

str, kwargs, optional, defaults toAnnotationFormat.COCO_DETECTION) — Data format of the annotations. One of “coco_detection” or “coco_panoptic”. - do_convert_annotations (

bool, kwargs, optional, defaults toTrue) — Controls whether to convert the annotations to the format expected by the GROUNDING_DINO model. Converts the bounding boxes to the format(center_x, center_y, width, height)and in the range[0, 1]. Can be overridden by thedo_convert_annotationsparameter in thepreprocessmethod. - **kwargs (

ImagesKwargs, optional) — Additional image preprocessing options. Model-specific kwargs are listed above; see the TypedDict class for the complete list of supported arguments.

Constructs a GroundingDinoImageProcessor image processor.

preprocess

< source >( images: typing.Union[ForwardRef('PIL.Image.Image'), numpy.ndarray, ForwardRef('torch.Tensor'), list['PIL.Image.Image'], list[numpy.ndarray], list['torch.Tensor']] annotations: dict[str, int | str | list[dict]] | list[dict[str, int | str | list[dict]]] | None = None return_segmentation_masks: bool | None = None masks_path: str | pathlib.Path | None = None **kwargs: typing_extensions.Unpack[transformers.models.grounding_dino.image_processing_grounding_dino.GroundingDinoImageProcessorKwargs] ) → ~image_processing_base.BatchFeature

Parameters

- images (

Union[PIL.Image.Image, numpy.ndarray, torch.Tensor, list[PIL.Image.Image], list[numpy.ndarray], list[torch.Tensor]]) — Image to preprocess. Expects a single or batch of images with pixel values ranging from 0 to 255. If passing in images with pixel values between 0 and 1, setdo_rescale=False. - annotations (

AnnotationTypeorlist[AnnotationType], optional) — Annotations to transform according to the padding that is applied to the images. - return_segmentation_masks (

bool, optional, defaults toself.return_segmentation_masks) — Whether to return segmentation masks. - masks_path (

strorpathlib.Path, optional) — Path to the directory containing the segmentation masks. - format (

str, kwargs, optional, defaults toAnnotationFormat.COCO_DETECTION) — Data format of the annotations. One of “coco_detection” or “coco_panoptic”. - do_convert_annotations (

bool, kwargs, optional, defaults toTrue) — Controls whether to convert the annotations to the format expected by the GROUNDING_DINO model. Converts the bounding boxes to the format(center_x, center_y, width, height)and in the range[0, 1]. Can be overridden by thedo_convert_annotationsparameter in thepreprocessmethod. - return_tensors (

stror TensorType, optional) — Returns stacked tensors if set to'pt', otherwise returns a list of tensors. - **kwargs (

ImagesKwargs, optional) — Additional image preprocessing options. Model-specific kwargs are listed above; see the TypedDict class for the complete list of supported arguments.

Returns

~image_processing_base.BatchFeature

- data (

dict) — Dictionary of lists/arrays/tensors returned by the call method (‘pixel_values’, etc.). - tensor_type (

Union[None, str, TensorType], optional) — You can give a tensor_type here to convert the lists of integers in PyTorch/Numpy Tensors at initialization.

post_process_object_detection

< source >( outputs: GroundingDinoObjectDetectionOutput threshold: float = 0.1 target_sizes: transformers.utils.generic.TensorType | list[tuple] | None = None ) → list[Dict]

Parameters

- outputs (

GroundingDinoObjectDetectionOutput) — Raw outputs of the model. - threshold (

float, optional, defaults to 0.1) — Score threshold to keep object detection predictions. - target_sizes (

torch.Tensororlist[tuple[int, int]], optional) — Tensor of shape(batch_size, 2)or list of tuples (tuple[int, int]) containing the target size(height, width)of each image in the batch. If unset, predictions will not be resized.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the following keys:

- “scores”: The confidence scores for each predicted box on the image.

- “labels”: Indexes of the classes predicted by the model on the image.

- “boxes”: Image bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.

Converts the raw output of GroundingDinoForObjectDetection into final bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format.

GroundingDinoProcessor

class transformers.GroundingDinoProcessor

< source >( image_processor tokenizer )

Constructs a GroundingDinoProcessor which wraps a image processor and a tokenizer into a single processor.

GroundingDinoProcessor offers all the functionalities of GroundingDinoImageProcessor and BertTokenizer. See the ~GroundingDinoImageProcessor and ~BertTokenizer for more information.

post_process_grounded_object_detection

< source >( outputs: GroundingDinoObjectDetectionOutput input_ids: transformers.utils.generic.TensorType | None = None threshold: float = 0.25 text_threshold: float = 0.25 target_sizes: transformers.utils.generic.TensorType | list[tuple] | None = None text_labels: list[list[str]] | None = None ) → list[Dict]

Parameters

- outputs (

GroundingDinoObjectDetectionOutput) — Raw outputs of the model. - input_ids (

torch.LongTensorof shape(batch_size, sequence_length), optional) — The token ids of the input text. If not provided will be taken from the model output. - threshold (

float, optional, defaults to 0.25) — Threshold to keep object detection predictions based on confidence score. - text_threshold (

float, optional, defaults to 0.25) — Score threshold to keep text detection predictions. - target_sizes (

torch.Tensororlist[tuple[int, int]], optional) — Tensor of shape(batch_size, 2)or list of tuples (tuple[int, int]) containing the target size(height, width)of each image in the batch. If unset, predictions will not be resized. - text_labels (

list[list[str]], optional) — List of candidate labels to be detected on each image. At the moment it’s NOT used, but required to be in signature for the zero-shot object detection pipeline. Text labels are instead extracted from theinput_idstensor provided inoutputs.

Returns

list[Dict]

A list of dictionaries, each dictionary containing the

- scores: tensor of confidence scores for detected objects

- boxes: tensor of bounding boxes in [x0, y0, x1, y1] format

- labels: list of text labels for each detected object (will be replaced with integer ids in v4.51.0)

- text_labels: list of text labels for detected objects

Converts the raw output of GroundingDinoForObjectDetection into final bounding boxes in (top_left_x, top_left_y, bottom_right_x, bottom_right_y) format and get the associated text label.

GroundingDinoConfig

class transformers.GroundingDinoConfig

< source >( transformers_version: str | None = None architectures: list[str] | None = None output_hidden_states: bool | None = False return_dict: bool | None = True dtype: typing.Union[str, ForwardRef('torch.dtype'), NoneType] = None chunk_size_feed_forward: int = 0 id2label: dict[int, str] | dict[str, str] | None = None label2id: dict[str, int] | dict[str, str] | None = None problem_type: typing.Optional[typing.Literal['regression', 'single_label_classification', 'multi_label_classification']] = None is_encoder_decoder: bool = True backbone_config: dict | transformers.configuration_utils.PreTrainedConfig | None = None text_config: dict | transformers.configuration_utils.PreTrainedConfig | None = None num_queries: int = 900 encoder_layers: int = 6 encoder_ffn_dim: int = 2048 encoder_attention_heads: int = 8 decoder_layers: int = 6 decoder_ffn_dim: int = 2048 decoder_attention_heads: int = 8 activation_function: str = 'relu' d_model: int = 256 dropout: float | int = 0.1 attention_dropout: float | int = 0.0 activation_dropout: float | int = 0.0 auxiliary_loss: bool = False position_embedding_type: str = 'sine' num_feature_levels: int = 4 encoder_n_points: int = 4 decoder_n_points: int = 4 two_stage: bool = True class_cost: float = 1.0 bbox_cost: float = 5.0 giou_cost: float = 2.0 bbox_loss_coefficient: float = 5.0 giou_loss_coefficient: float = 2.0 focal_alpha: float = 0.25 disable_custom_kernels: bool = False max_text_len: int = 256 text_enhancer_dropout: float | int = 0.0 fusion_droppath: float | int = 0.1 fusion_dropout: float | int = 0.0 embedding_init_target: bool = True query_dim: int = 4 decoder_bbox_embed_share: bool = True two_stage_bbox_embed_share: bool = False positional_embedding_temperature: int = 20 init_std: float = 0.02 layer_norm_eps: float = 1e-05 tie_word_embeddings: bool = True )

Parameters

- is_encoder_decoder (

bool, optional, defaults toTrue) — Whether the model is used as an encoder/decoder or not. - backbone_config (

Union[dict, ~configuration_utils.PreTrainedConfig], optional) — The configuration of the backbone model. - text_config (

Union[dict, ~configuration_utils.PreTrainedConfig], optional) — The config object or dictionary of the text backbone. - num_queries (

int, optional, defaults to 900) — Number of object queries, i.e. detection slots. This is the maximal number of objects GroundingDinoModel can detect in a single image. - encoder_layers (

int, optional, defaults to6) — Number of hidden layers in the Transformer encoder. Will use the same value asnum_layersif not set. - encoder_ffn_dim (

int, optional, defaults to2048) — Dimensionality of the “intermediate” (often named feed-forward) layer in encoder. - encoder_attention_heads (

int, optional, defaults to8) — Number of attention heads for each attention layer in the Transformer encoder. - decoder_layers (

int, optional, defaults to6) — Number of hidden layers in the Transformer decoder. Will use the same value asnum_layersif not set. - decoder_ffn_dim (

int, optional, defaults to2048) — Dimensionality of the “intermediate” (often named feed-forward) layer in decoder. - decoder_attention_heads (

int, optional, defaults to8) — Number of attention heads for each attention layer in the Transformer decoder. - activation_function (

str, optional, defaults torelu) — The non-linear activation function (function or string) in the decoder. For example,"gelu","relu","silu", etc. - d_model (

int, optional, defaults to256) — Size of the encoder layers and the pooler layer. - dropout (

Union[float, int], optional, defaults to0.1) — The ratio for all dropout layers. - attention_dropout (

Union[float, int], optional, defaults to0.0) — The dropout ratio for the attention probabilities. - activation_dropout (

Union[float, int], optional, defaults to0.0) — The dropout ratio for activations inside the fully connected layer. - auxiliary_loss (

bool, optional, defaults toFalse) — Whether auxiliary decoding losses (losses at each decoder layer) are to be used. - position_embedding_type (

str, optional, defaults to"sine") — Type of position embeddings to be used on top of the image features. One of"sine"or"learned". - num_feature_levels (

int, optional, defaults to 4) — The number of input feature levels. - encoder_n_points (

int, optional, defaults to 4) — The number of sampled keys in each feature level for each attention head in the encoder. - decoder_n_points (

int, optional, defaults to 4) — The number of sampled keys in each feature level for each attention head in the decoder. - two_stage (

bool, optional, defaults toTrue) — Whether to apply a two-stage deformable DETR, where the region proposals are also generated by a variant of Grounding DINO, which are further fed into the decoder for iterative bounding box refinement. - class_cost (

float, optional, defaults to1.0) — Relative weight of the classification error in the Hungarian matching cost. - bbox_cost (

float, optional, defaults to5.0) — Relative weight of the L1 bounding box error in the Hungarian matching cost. - giou_cost (

float, optional, defaults to2.0) — Relative weight of the generalized IoU loss in the Hungarian matching cost. - bbox_loss_coefficient (

float, optional, defaults to5.0) — Relative weight of the L1 bounding box loss in the panoptic segmentation loss. - giou_loss_coefficient (

float, optional, defaults to2.0) — Relative weight of the generalized IoU loss in the panoptic segmentation loss. - focal_alpha (

float, optional, defaults to0.25) — Alpha parameter in the focal loss. - disable_custom_kernels (

bool, optional, defaults toFalse) — Disable the use of custom CUDA and CPU kernels. This option is necessary for the ONNX export, as custom kernels are not supported by PyTorch ONNX export. - max_text_len (

int, optional, defaults to 256) — The maximum length of the text input. - text_enhancer_dropout (

float, optional, defaults to 0.0) — The dropout ratio for the text enhancer. - fusion_droppath (

float, optional, defaults to 0.1) — The droppath ratio for the fusion module. - fusion_dropout (

float, optional, defaults to 0.0) — The dropout ratio for the fusion module. - embedding_init_target (

bool, optional, defaults toTrue) — Whether to initialize the target with Embedding weights. - query_dim (

int, optional, defaults to 4) — The dimension of the query vector. - decoder_bbox_embed_share (

bool, optional, defaults toTrue) — Whether to share the bbox regression head for all decoder layers. - two_stage_bbox_embed_share (

bool, optional, defaults toFalse) — Whether to share the bbox embedding between the two-stage bbox generator and the region proposal generation. - positional_embedding_temperature (

float, optional, defaults to 20) — The temperature for Sine Positional Embedding that is used together with vision backbone. - init_std (

float, optional, defaults to0.02) — The standard deviation of the truncated_normal_initializer for initializing all weight matrices. - layer_norm_eps (

float, optional, defaults to1e-05) — The epsilon used by the layer normalization layers. - tie_word_embeddings (

bool, optional, defaults toTrue) — Whether to tie weight embeddings according to model’stied_weights_keysmapping.

This is the configuration class to store the configuration of a Grounding DinoModel. It is used to instantiate a Grounding Dino model according to the specified arguments, defining the model architecture. Instantiating a configuration with the defaults will yield a similar configuration to that of the IDEA-Research/grounding-dino-tiny

Configuration objects inherit from PreTrainedConfig and can be used to control the model outputs. Read the documentation from PreTrainedConfig for more information.

Examples:

>>> from transformers import GroundingDinoConfig, GroundingDinoModel

>>> # Initializing a Grounding DINO IDEA-Research/grounding-dino-tiny style configuration

>>> configuration = GroundingDinoConfig()

>>> # Initializing a model (with random weights) from the IDEA-Research/grounding-dino-tiny style configuration

>>> model = GroundingDinoModel(configuration)

>>> # Accessing the model configuration

>>> configuration = model.configGroundingDinoModel

class transformers.GroundingDinoModel

< source >( config: GroundingDinoConfig )

Parameters

- config (GroundingDinoConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

The bare Grounding DINO Model (consisting of a backbone and encoder-decoder Transformer) outputting raw hidden-states without any specific head on top.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its model (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matter related to general usage and behavior.

forward

< source >( pixel_values: Tensor input_ids: Tensor token_type_ids: torch.Tensor | None = None attention_mask: torch.Tensor | None = None pixel_mask: torch.Tensor | None = None encoder_outputs = None output_attentions = None output_hidden_states = None return_dict = None **kwargs ) → GroundingDinoModelOutput or tuple(torch.FloatTensor)

Parameters

- pixel_values (

torch.Tensorof shape(batch_size, num_channels, image_size, image_size)) — The tensors corresponding to the input images. Pixel values can be obtained using GroundingDinoImageProcessor. SeeGroundingDinoImageProcessor.__call__()for details (GroundingDinoProcessor uses GroundingDinoImageProcessor for processing images). - input_ids (

torch.LongTensorof shape(batch_size, text_sequence_length)) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default should you provide it.Indices can be obtained using AutoTokenizer. See BertTokenizer.call() for details.

- token_type_ids (

torch.LongTensorof shape(batch_size, text_sequence_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in[0, 1]: 0 corresponds to asentence Atoken, 1 corresponds to asentence Btoken - attention_mask (

torch.Tensorof shape(batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in[0, 1]:- 1 for tokens that are not masked,

- 0 for tokens that are masked.

- pixel_mask (

torch.Tensorof shape(batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in[0, 1]:- 1 for pixels that are real (i.e. not masked),

- 0 for pixels that are padding (i.e. masked).

- encoder_outputs (`

) -- Tuple consists of (last_hidden_state, *optional*:hidden_states, *optional*:attentions)last_hidden_stateof shape(batch_size, sequence_length, hidden_size)`, optional) is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder. - output_attentions (`

) -- Whether or not to return the attentions tensors of all attention layers. Seeattentions` under returned tensors for more detail. - output_hidden_states (`

) -- Whether or not to return the hidden states of all layers. Seehidden_states` under returned tensors for more detail. - return_dict (“) — Whether or not to return a ModelOutput instead of a plain tuple.

Returns

GroundingDinoModelOutput or tuple(torch.FloatTensor)

A GroundingDinoModelOutput or a tuple of

torch.FloatTensor (if return_dict=False is passed or when config.return_dict=False) comprising various

elements depending on the configuration (GroundingDinoConfig) and inputs.

The GroundingDinoModel forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the

Moduleinstance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

last_hidden_state (

torch.FloatTensorof shape(batch_size, num_queries, hidden_size)) — Sequence of hidden-states at the output of the last layer of the decoder of the model.init_reference_points (

torch.FloatTensorof shape(batch_size, num_queries, 4)) — Initial reference points sent through the Transformer decoder.intermediate_hidden_states (

torch.FloatTensorof shape(batch_size, config.decoder_layers, num_queries, hidden_size)) — Stacked intermediate hidden states (output of each layer of the decoder).intermediate_reference_points (

torch.FloatTensorof shape(batch_size, config.decoder_layers, num_queries, 4)) — Stacked intermediate reference points (reference points of each layer of the decoder).decoder_hidden_states (

tuple[torch.FloatTensor], optional, returned whenoutput_hidden_states=Trueis passed or whenconfig.output_hidden_states=True) — Tuple oftorch.FloatTensor(one for the output of the embeddings, if the model has an embedding layer, + one for the output of each layer) of shape(batch_size, sequence_length, hidden_size).Hidden-states of the decoder at the output of each layer plus the initial embedding outputs.

decoder_attentions (

tuple[tuple[torch.FloatTensor]], optional, returned whenoutput_attentions=Trueis passed or whenconfig.output_attentions=True) — Tuple oftorch.FloatTensor(one for each layer) of shape(batch_size, num_heads, sequence_length, sequence_length).Attentions weights of the decoder, after the attention softmax, used to compute the weighted average in the self-attention heads.

encoder_last_hidden_state_vision (

torch.FloatTensorof shape(batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.encoder_last_hidden_state_text (

torch.FloatTensorof shape(batch_size, sequence_length, hidden_size), optional) — Sequence of hidden-states at the output of the last layer of the encoder of the model.encoder_vision_hidden_states (

tuple(torch.FloatTensor), optional, returned whenoutput_hidden_states=Trueis passed or whenconfig.output_hidden_states=True) — Tuple oftorch.FloatTensor(one for the output of the vision embeddings + one for the output of each layer) of shape(batch_size, sequence_length, hidden_size). Hidden-states of the vision encoder at the output of each layer plus the initial embedding outputs.encoder_text_hidden_states (

tuple(torch.FloatTensor), optional, returned whenoutput_hidden_states=Trueis passed or whenconfig.output_hidden_states=True) — Tuple oftorch.FloatTensor(one for the output of the text embeddings + one for the output of each layer) of shape(batch_size, sequence_length, hidden_size). Hidden-states of the text encoder at the output of each layer plus the initial embedding outputs.encoder_attentions (

tuple(tuple(torch.FloatTensor)), optional, returned whenoutput_attentions=Trueis passed or whenconfig.output_attentions=True) — Tuple of tuples oftorch.FloatTensor(one for attention for each layer) of shape(batch_size, num_heads, sequence_length, sequence_length). Attentions weights after the attention softmax, used to compute the weighted average in the text-vision attention, vision-text attention, text-enhancer (self-attention) and multi-scale deformable attention heads. attention softmax, used to compute the weighted average in the bi-attention heads.enc_outputs_class (

torch.FloatTensorof shape(batch_size, sequence_length, config.num_labels), optional, returned whenconfig.two_stage=True) — Predicted bounding boxes scores where the topconfig.num_queriesscoring bounding boxes are picked as region proposals in the first stage. Output of bounding box binary classification (i.e. foreground and background).enc_outputs_coord_logits (

torch.FloatTensorof shape(batch_size, sequence_length, 4), optional, returned whenconfig.two_stage=True) — Logits of predicted bounding boxes coordinates in the first stage.encoder_logits (

torch.FloatTensorof shape(batch_size, sequence_length, config.num_labels), optional, returned whenconfig.two_stage=True) — Logits of topconfig.num_queriesscoring bounding boxes in the first stage.encoder_pred_boxes (

torch.FloatTensorof shape(batch_size, sequence_length, 4), optional, returned whenconfig.two_stage=True) — Coordinates of topconfig.num_queriesscoring bounding boxes in the first stage.

Examples:

>>> from transformers import AutoProcessor, AutoModel

>>> from PIL import Image

>>> import httpx

>>> from io import BytesIO

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> with httpx.stream("GET", url) as response:

... image = Image.open(BytesIO(response.read()))

>>> text = "a cat."

>>> processor = AutoProcessor.from_pretrained("IDEA-Research/grounding-dino-tiny")

>>> model = AutoModel.from_pretrained("IDEA-Research/grounding-dino-tiny")

>>> inputs = processor(images=image, text=text, return_tensors="pt")

>>> outputs = model(**inputs)

>>> last_hidden_states = outputs.last_hidden_state

>>> list(last_hidden_states.shape)

[1, 900, 256]GroundingDinoForObjectDetection

class transformers.GroundingDinoForObjectDetection

< source >( config: GroundingDinoConfig )

Parameters

- config (GroundingDinoConfig) — Model configuration class with all the parameters of the model. Initializing with a config file does not load the weights associated with the model, only the configuration. Check out the from_pretrained() method to load the model weights.

Grounding DINO Model (consisting of a backbone and encoder-decoder Transformer) with object detection heads on top, for tasks such as COCO detection.

This model inherits from PreTrainedModel. Check the superclass documentation for the generic methods the library implements for all its model (such as downloading or saving, resizing the input embeddings, pruning heads etc.)

This model is also a PyTorch torch.nn.Module subclass. Use it as a regular PyTorch Module and refer to the PyTorch documentation for all matter related to general usage and behavior.

forward

< source >( pixel_values: FloatTensor input_ids: LongTensor token_type_ids: torch.LongTensor | None = None attention_mask: torch.LongTensor | None = None pixel_mask: torch.BoolTensor | None = None encoder_outputs: transformers.models.grounding_dino.modeling_grounding_dino.GroundingDinoEncoderOutput | tuple | None = None output_attentions: bool | None = None output_hidden_states: bool | None = None return_dict: bool | None = None labels: list[dict[str, torch.LongTensor | torch.FloatTensor]] | None = None **kwargs )

Parameters

- pixel_values (

torch.FloatTensorof shape(batch_size, num_channels, image_size, image_size)) — The tensors corresponding to the input images. Pixel values can be obtained using GroundingDinoImageProcessor. SeeGroundingDinoImageProcessor.__call__()for details (GroundingDinoProcessor uses GroundingDinoImageProcessor for processing images). - input_ids (

torch.LongTensorof shape(batch_size, text_sequence_length)) — Indices of input sequence tokens in the vocabulary. Padding will be ignored by default should you provide it.Indices can be obtained using AutoTokenizer. See BertTokenizer.call() for details.

- token_type_ids (

torch.LongTensorof shape(batch_size, text_sequence_length), optional) — Segment token indices to indicate first and second portions of the inputs. Indices are selected in[0, 1]: 0 corresponds to asentence Atoken, 1 corresponds to asentence Btoken - attention_mask (

torch.LongTensorof shape(batch_size, sequence_length), optional) — Mask to avoid performing attention on padding token indices. Mask values selected in[0, 1]:- 1 for tokens that are not masked,

- 0 for tokens that are masked.

- pixel_mask (

torch.BoolTensorof shape(batch_size, height, width), optional) — Mask to avoid performing attention on padding pixel values. Mask values selected in[0, 1]:- 1 for pixels that are real (i.e. not masked),

- 0 for pixels that are padding (i.e. masked).

- encoder_outputs (

Union[~models.grounding_dino.modeling_grounding_dino.GroundingDinoEncoderOutput, tuple], optional) — Tuple consists of (last_hidden_state, optional:hidden_states, optional:attentions)last_hidden_stateof shape(batch_size, sequence_length, hidden_size), optional) is a sequence of hidden-states at the output of the last layer of the encoder. Used in the cross-attention of the decoder. - output_attentions (

bool, optional) — Whether or not to return the attentions tensors of all attention layers. Seeattentionsunder returned tensors for more detail. - output_hidden_states (

bool, optional) — Whether or not to return the hidden states of all layers. Seehidden_statesunder returned tensors for more detail. - return_dict (

bool, optional) — Whether or not to return a ModelOutput instead of a plain tuple. - labels (

list[Dict]of len(batch_size,), optional) — Labels for computing the bipartite matching loss. List of dicts, each dictionary containing at least the following 2 keys: ‘class_labels’ and ‘boxes’ (the class labels and bounding boxes of an image in the batch respectively). The class labels themselves should be atorch.LongTensorof len(number of bounding boxes in the image,)and the boxes atorch.FloatTensorof shape(number of bounding boxes in the image, 4).

The GroundingDinoForObjectDetection forward method, overrides the __call__ special method.

Although the recipe for forward pass needs to be defined within this function, one should call the

Moduleinstance afterwards instead of this since the former takes care of running the pre and post processing steps while the latter silently ignores them.

Examples:

>>> import httpx

>>> from io import BytesIO

>>> import torch

>>> from PIL import Image

>>> from transformers import AutoProcessor, AutoModelForZeroShotObjectDetection

>>> model_id = "IDEA-Research/grounding-dino-tiny"

>>> device = "cuda"

>>> processor = AutoProcessor.from_pretrained(model_id)

>>> model = AutoModelForZeroShotObjectDetection.from_pretrained(model_id).to(device)

>>> url = "http://images.cocodataset.org/val2017/000000039769.jpg"

>>> with httpx.stream("GET", url) as response:

... image = Image.open(BytesIO(response.read()))

>>> # Check for cats and remote controls

>>> text_labels = [["a cat", "a remote control"]]

>>> inputs = processor(images=image, text=text_labels, return_tensors="pt").to(device)

>>> with torch.no_grad():

... outputs = model(**inputs)

>>> results = processor.post_process_grounded_object_detection(

... outputs,

... threshold=0.4,

... text_threshold=0.3,

... target_sizes=[(image.height, image.width)]

... )

>>> # Retrieve the first image result

>>> result = results[0]

>>> for box, score, text_label in zip(result["boxes"], result["scores"], result["text_labels"]):

... box = [round(x, 2) for x in box.tolist()]

... print(f"Detected {text_label} with confidence {round(score.item(), 3)} at location {box}")

Detected a cat with confidence 0.479 at location [344.7, 23.11, 637.18, 374.28]

Detected a cat with confidence 0.438 at location [12.27, 51.91, 316.86, 472.44]

Detected a remote control with confidence 0.478 at location [38.57, 70.0, 176.78, 118.18]