U-MARVEL: Unveiling Key Factors for Universal Multimodal Retrieval via Embedding Learning with MLLMs

This repository contains the official model checkpoints and inference code for U-MARVEL, presented in the paper U-MARVEL: Unveiling Key Factors for Universal Multimodal Retrieval via Embedding Learning with MLLMs.

Universal multimodal retrieval (UMR) addresses complex retrieval tasks in which both queries and candidates can span diverse modalities. State-of-the-art methods build on multimodal large language models (MLLMs) and train them with contrastive learning. Despite their success, the mechanisms underlying their retrieval capabilities remain largely unexplored. This gap may lead to suboptimal performance and limited generalization.
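The contrastive objective behind MLLM-based embedding models is typically an InfoNCE loss over a batch of (query, candidate) pairs. The sketch below is a generic, pure-Python illustration, not the U-MARVEL training code. It assumes that query i's positive is candidate i and treats every other in-batch candidate as a negative. The temperature of 0.05 is an illustrative assumption and does not come from the paper.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb)


def info_nce(queries: List[List[float]], candidates: List[List[float]],
             temperature: float = 0.05) -> float:
    """In-batch InfoNCE: query i's positive is candidate i, and all other
    candidates in the batch act as negatives. Temperature is an assumption."""
    assert len(queries) == len(candidates)
    total = 0.0
    for i, q in enumerate(queries):
        logits = [cosine(q, c) / temperature for c in candidates]
        # log-sum-exp with max subtraction for numerical stability
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(x - m) for x in logits))
        total += log_denom - logits[i]  # equals -log softmax(logits)[i]
    return total / len(queries)
```

When the pairs are aligned, the loss approaches zero. When the candidates are swapped, the loss becomes large. This gradient signal is what pulls matching query and candidate embeddings together and pushes non-matching ones apart.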

In this study, we systematically analyze the key factors driving effective embedding learning for UMR with MLLMs. We implement a general MLLM-based embedding learning pipeline and use it to investigate what makes a universal retrieval system perform well. Our analysis covers various aspects of embedding generation and training strategies, including progressive transition, hard negative mining, and re-ranker distillation. Our findings reveal that often-overlooked factors can significantly impact model performance.
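Two of the training strategies named above can be sketched in generic form. In the sketch below, hard negative mining selects the pool items that the current model scores highest for a query, excluding the labeled positive. Re-ranker distillation is shown as a KL divergence that pushes the embedding model's score distribution over candidates toward the re-ranker's distribution. Both are common textbook formulations chosen for illustration, and the function names are hypothetical. The paper's exact mining filters and distillation loss may differ.

```python
import math
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def mine_hard_negatives(query: Sequence[float], pool: List[Sequence[float]],
                        positive_idx: int, k: int) -> List[int]:
    """Hypothetical helper: indices of the k pool items the current model
    scores highest for `query`, excluding the labeled positive."""
    # Real pipelines often also filter likely false negatives (for example,
    # by skipping the very top ranks); that step is omitted here.
    candidates = [i for i in range(len(pool)) if i != positive_idx]
    candidates.sort(key=lambda i: dot(query, pool[i]), reverse=True)
    return candidates[:k]


def softmax(scores: Sequence[float], temperature: float = 1.0) -> List[float]:
    logits = [s / temperature for s in scores]
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    z = sum(exps)
    return [e / z for e in exps]


def distill_kl(teacher_scores: Sequence[float], student_scores: Sequence[float],
               temperature: float = 1.0) -> float:
    """KL(teacher || student) between the re-ranker's (teacher's) and the
    embedding model's (student's) distributions over one query's candidates."""
    p = softmax(teacher_scores, temperature)
    q = softmax(student_scores, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)
```

The KL term is zero when the embedding model already ranks candidates the way the re-ranker does. The term grows as the two distributions diverge.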

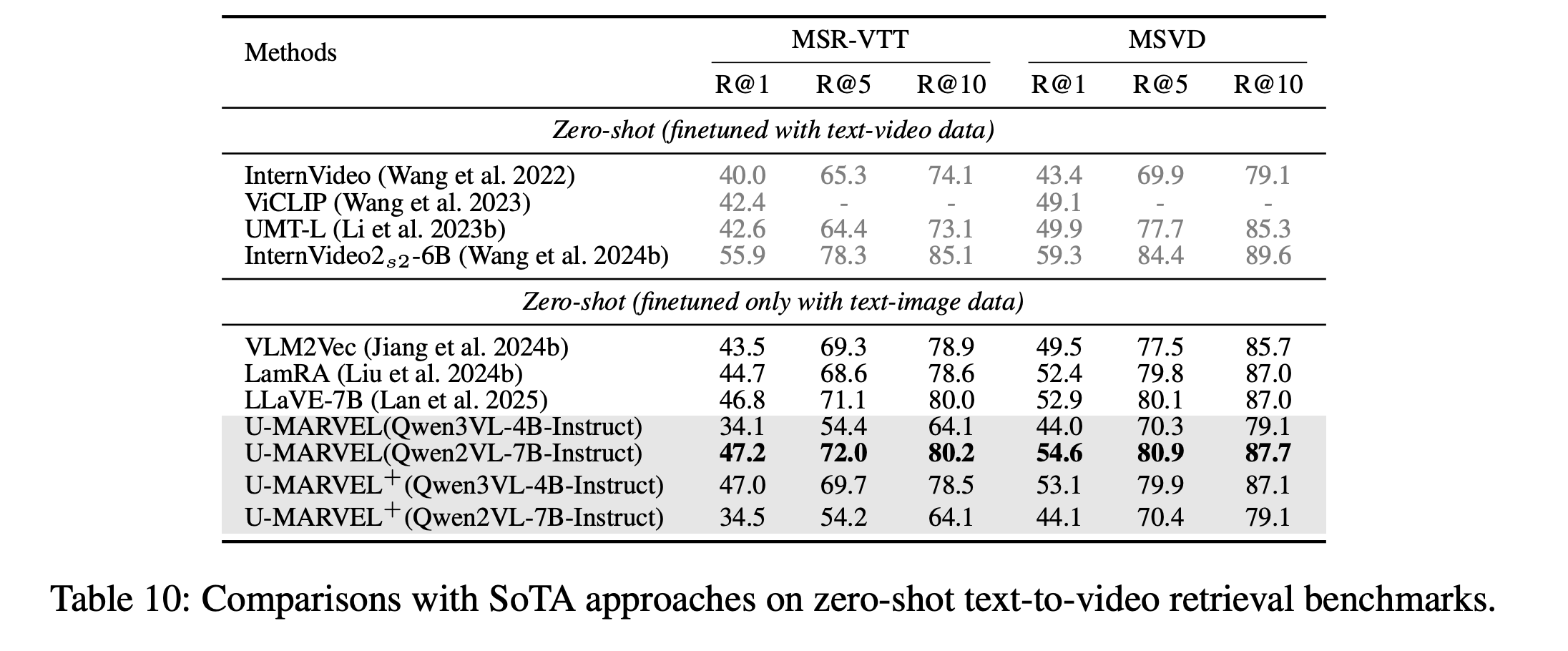

Building on these insights, we introduce U-MARVEL (Universal Multimodal Retrieval via Embedding Learning), a unified framework that outperforms state-of-the-art competitors on the M-BEIR benchmark in supervised settings and demonstrates strong zero-shot performance on tasks such as composed image retrieval and text-to-video retrieval. These results highlight the generalization potential of our framework across various embedding-based retrieval tasks, providing valuable insights for future research.
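At inference time, embedding-based retrieval reduces to nearest-neighbor search. The model encodes the query and every candidate into the same vector space, whatever their modality, and then ranks the candidates by similarity. The minimal pure-Python sketch below assumes the model has already produced the embeddings and uses cosine similarity for ranking. The candidate IDs are hypothetical.

```python
import heapq
import math
from typing import Dict, List, Sequence, Tuple


def l2_normalize(v: Sequence[float]) -> List[float]:
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def retrieve(query_emb: Sequence[float], index: Dict[str, Sequence[float]],
             k: int) -> List[Tuple[str, float]]:
    """Return the k (candidate_id, cosine_similarity) pairs closest to the query.
    `index` maps hypothetical candidate IDs to precomputed embeddings."""
    q = l2_normalize(query_emb)
    scored = (
        (cid, sum(a * b for a, b in zip(q, l2_normalize(emb))))
        for cid, emb in index.items()
    )
    # heapq.nlargest avoids fully sorting a large candidate pool
    return heapq.nlargest(k, scored, key=lambda t: t[1])
```

A production system would normalize candidate embeddings once, ahead of time, and would use an approximate nearest-neighbor index instead of this linear scan.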

Model Checkpoints

├── checkpoints
│   ├── hf_models
│   │   ├── Qwen2-VL-7B-Instruct
│   │   └── Qwen3-VL-4B-Instruct
│   ├── U-MARVEL-Qwen2VL-7B-Instruct
│   └── U-MARVEL-Qwen3VL-4B-Instruct
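Before you run the demo, a quick check that the checkpoints sit where the directory layout above expects can save you a confusing load error. The sketch below is a hypothetical helper and is not part of the repository. It assumes the listed paths are relative to the repository root.

```python
from pathlib import Path
from typing import List

# Hypothetical: relative paths copied from the checkpoint layout above.
EXPECTED_DIRS = [
    "checkpoints/hf_models/Qwen2-VL-7B-Instruct",
    "checkpoints/hf_models/Qwen3-VL-4B-Instruct",
    "checkpoints/U-MARVEL-Qwen2VL-7B-Instruct",
    "checkpoints/U-MARVEL-Qwen3VL-4B-Instruct",
]


def missing_checkpoint_dirs(repo_root: str = ".") -> List[str]:
    """Return the expected checkpoint directories that do not exist yet."""
    root = Path(repo_root)
    return [d for d in EXPECTED_DIRS if not (root / d).is_dir()]
```

If you only plan to run one of the two models, you can trim the list to the directories that model needs.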

- U-MARVEL-Qwen2VL-7B-Instruct 🤗

- U-MARVEL-Qwen3VL-4B-Instruct 🤗

- Code available at: U-MARVEL

🚀 Demo

To get started, create a conda environment and install the required dependencies:

conda create -n u-marvel python=3.9 -y
conda activate u-marvel
pip install -r requirements_qwen3_vl.txt

Then run the demo:

python demo.py

Model Performance

The proposed U-MARVEL framework establishes new state-of-the-art performance on the M-BEIR benchmark, both as a single embedding model and in a recall-then-rerank setup.
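Recall-then-rerank splits retrieval into two stages. First, a cheap embedding similarity recalls a shortlist of candidates. Second, a more expensive re-ranker rescores only that shortlist. The sketch below shows this control flow in generic form. Here `rerank_score` stands in for a re-ranker model. Like the other names in the sketch, it is hypothetical and not the repository's API.

```python
import heapq
from typing import Callable, Dict, List, Sequence, Tuple


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def recall_then_rerank(
    query: str,
    query_emb: Sequence[float],
    index: Dict[str, Sequence[float]],
    rerank_score: Callable[[str, str], float],  # hypothetical re-ranker hook
    recall_k: int,
    final_k: int,
) -> List[Tuple[str, float]]:
    # Stage 1 (recall): rank every candidate by a cheap dot product
    # (equivalent to cosine similarity if embeddings are L2-normalized).
    recalled = heapq.nlargest(recall_k, index.items(),
                              key=lambda kv: dot(query_emb, kv[1]))
    # Stage 2 (rerank): score only the shortlist with the expensive model.
    reranked = [(cid, rerank_score(query, cid)) for cid, _ in recalled]
    reranked.sort(key=lambda t: t[1], reverse=True)
    return reranked[:final_k]
```

This structure implies a trade-off. The re-ranker never sees candidates outside the top `recall_k`, so the recall of the embedding stage bounds the final quality.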

Acknowledgements

Many thanks to the LamRA codebase.

Citation

If you use this code for your research or project, please cite:

@inproceedings{li2026umarvel,
  title={U-{MARVEL}: Unveiling Key Factors for Universal Multimodal Retrieval via Embedding Learning with {MLLM}s},
  author={Xiaojie Li and Chu Li and Shi-Zhe Chen and Xi Chen},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026}
}


Model tree for TencentBAC/U-MARVEL-Qwen3VL-4B-Instruct. Base model: Qwen/Qwen3-VL-4B-Instruct.